The World’s Best Companies Use Litmos

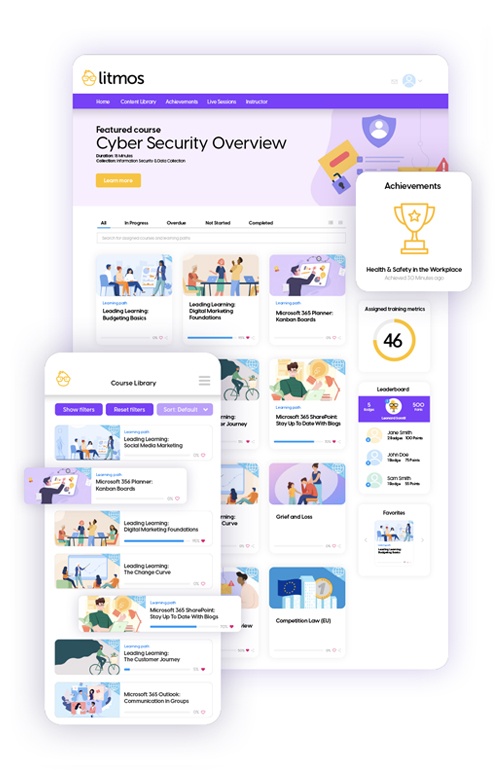

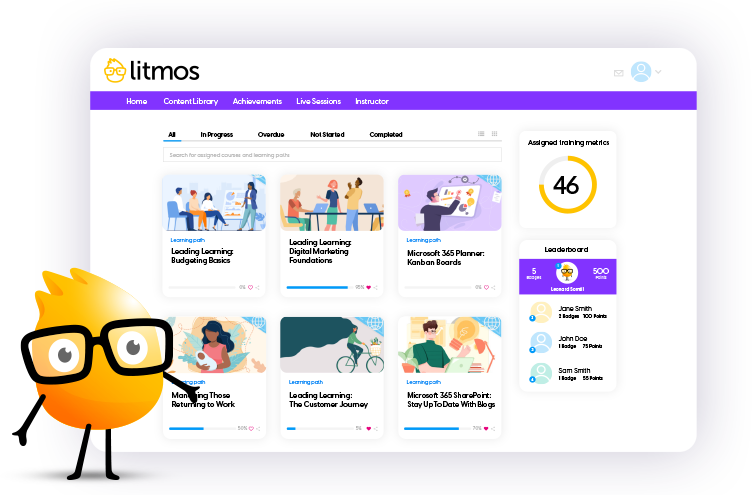

Online Learning for Every Segment of Your Business

Why Choose Litmos?

Quick deployment and integration

Deploy in minutes and easily integrate with the rest of your ecosystem. Choose from 30+ out-of-the-box connectors or build your own using open APIs.

Serious security

Trust that all data is encrypted, stored behind a firewall, transferred over HTTPS, keyed with a private certificate, and handled in compliance with GDPR.

Off-the-shelf content

Always current, always relevant – offer ready-to-access courses in compliance, leadership and management, communication and social skills, and much more.

Robust reporting

Measure and influence performance, and track course completions, test averages, content popularity, and more with built-in reporting and analytics.

Universal accessibility

Make training accessible anywhere, 24/7, online or offline, including to learners with disabilities. With 35+ preconfigured languages, users can select their language of choice, eliminating barriers to learning.

Built-in content authoring

Create dynamic SCORM content within the LMS. Designed to support everyone from the novice trainer to the expert instructional designer, the built-in authoring tool eliminates the need for external content creation applications.

The World’s Top Companies Trust Litmos

Users taught (millions)

Courses completed (billions)

Industry awards, and counting…

Countries